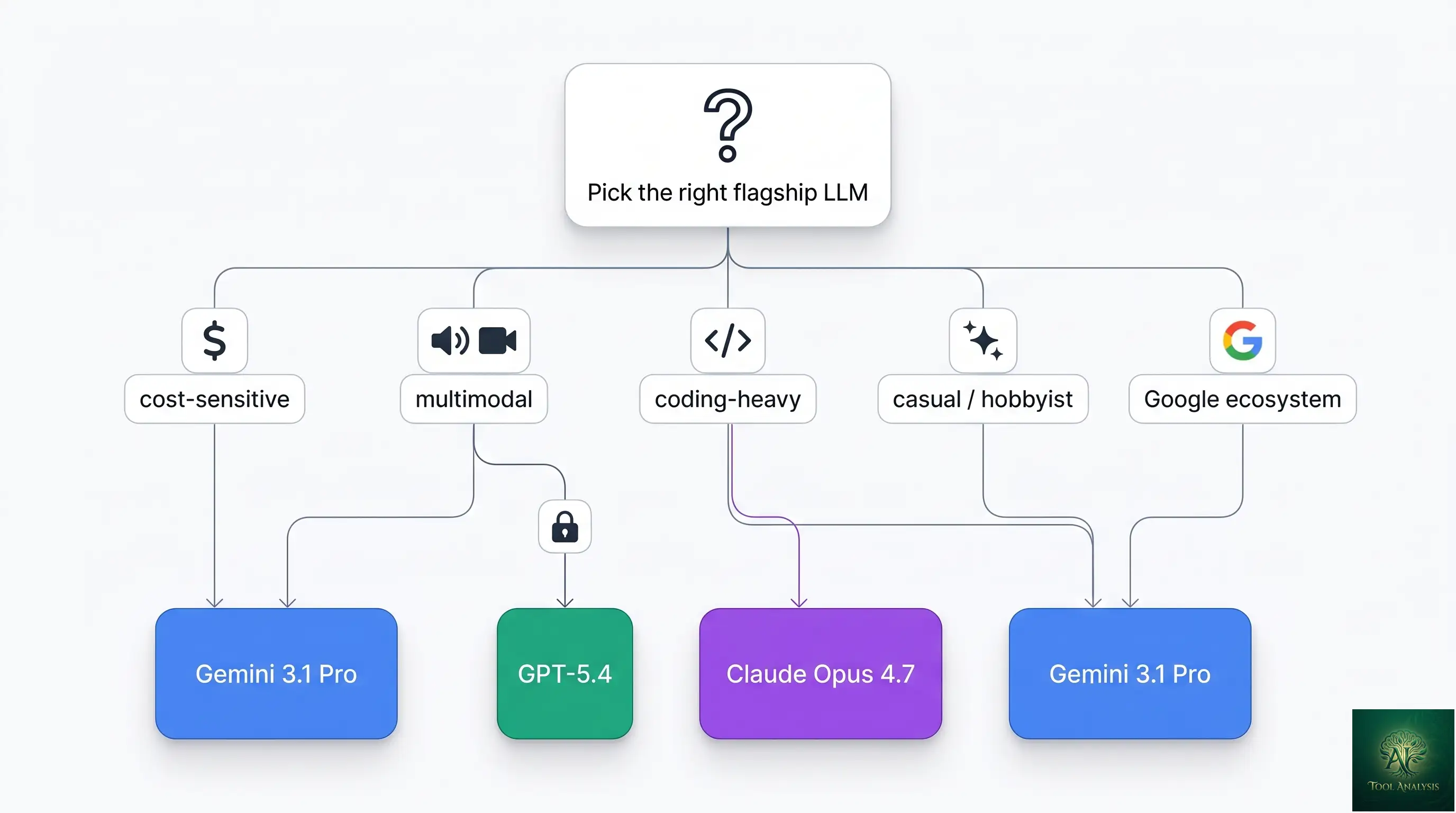

The flagship Gemini lineup in May 2026 is the strongest Google has ever shipped, and the genuine answer to “is it better than Claude or GPT” is more interesting than yes or no. Gemini 3.1 Pro ties Claude Opus 4.7 on the composite Artificial Analysis Intelligence Index (57 vs 57), beats both Claude and GPT decisively on price (at $2/$12 versus $5/$25 per 1M tokens, roughly 2.1-2.5× cheaper than Opus on the API), and is the only flagship that ships native voice and video processing in a single model. Where it loses ground: Claude Opus 4.7 still wins coding-heavy benchmarks by a meaningful margin (87.6% vs 80.6% on SWE-bench Verified). This review covers Gemini 3.1 Pro in its May 2026 state: what’s genuinely class-leading, where it’s competitive but not dominant, and the honest verdict for each user persona.

⚡ TL;DR – The Bottom Line

What This Is: Honest review of the Google Gemini lineup in May 2026 — Gemini 3.1 Pro flagship plus the Flash and Nano Banana siblings.

Best For: Cost-conscious teams, multimodal applications (voice/video), high-volume API workloads, and Google ecosystem users.

Pricing: Free in the Gemini app. Google AI Pro $19.99/mo. Ultra $249.99/mo. API at $2/$12 per 1M tokens (roughly 2.1-2.5× cheaper than Claude Opus 4.7 at $5/$25).

Our Take: Tied with Claude Opus 4.7 on the Intelligence Index (57 = 57). Wins on price, native voice + video, 1M-context-at-GA. Loses on coding benchmarks. The right default flagship for most non-coding production work.

⚠️ The Catch: If your work is coding-heavy, Claude Opus 4.7’s 7-point SWE-bench lead is real and matters. Pick on workload.

The Bottom Line: Should You Pick Gemini?

- You’re cost-sensitive or running high-volume API workloads: Gemini 3.1 Pro is the right call. At $2/$12 per million tokens, it’s 2.5× cheaper on input and about 2.1× cheaper on output than Claude Opus 4.7 ($5/$25), and meaningfully cheaper than GPT-5.4 ($3/$15). For chatbots, content generation, RAG pipelines, and summarization, the quality is more than adequate at the lower price.

- You need native voice or video processing: Gemini wins by default — it’s the only flagship model that handles audio and video natively in the API without preprocessing. Long-form video understanding (10+ minutes) works without manual frame extraction. Claude Opus 4.7 and GPT-5.4 don’t ship this in-model.

- You’re building inside the Google ecosystem: Gemini integrates natively with Workspace (Docs, Sheets, Gmail, Meet), Vertex AI for enterprise, Google AI Studio for prototyping, and the new Antigravity agentic IDE. If your team already lives in Google’s stack, friction is near zero.

- You’re a casual or hobbyist user: The free tier in the Gemini consumer app is the most generous in the flagship category — you get Gemini 3.1 Pro access (with daily limits), Nano Banana 2 image generation, and Live multimodal sessions for $0. ChatGPT Free and Claude.ai Free both put their flagship models behind a $20/month paywall.

- Your work is coding-heavy and reliability matters more than cost: Pay for Claude Opus 4.7 instead. The 7-point SWE-bench Verified gap (87.6% vs 80.6%) is real, and the 10-point gap on the harder SWE-bench Pro variant is decisive for multi-language work. Gemini’s coding ability is competitive, not best-in-class.

Skip Gemini if you’re locked into Anthropic for compliance reasons or if your buying decision is being driven entirely by an Anthropic-specific feature like the “xhigh” reasoning effort level on Opus 4.7. For everyone else, Gemini 3.1 Pro is at least worth a side-by-side eval against your current flagship — the price gap alone justifies the testing time.

What Changed Since Late 2025

- Gemini 3.0 Pro → 3.1 Pro on February 19, 2026. The 3.1 update brought stronger reasoning (GPQA Diamond now 94.3%), retained the 1M context, added native voice + video processing in the production API, and improved instruction-following on long-context tasks.

- SWE-bench Verified 80.6% — meaningful coding lift from the 3.0 baseline, but Claude Opus 4.7 still leads at 87.6%. Gemini concedes coding-leader status to Anthropic in exchange for cost leadership.

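- Pricing repositioned to $2/$12 per 1M tokens, a deliberate cost-leader play. For high-volume API work this saves roughly 56-57% per month vs Opus 4.7 ($5/$25) on typical input-heavy workloads; the full range is 52% (output-only) to 60% (input-only) depending on the input/output mix.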

- 1M context now GA at standard pricing. Gemini held its longstanding context-window advantage until Anthropic matched the spec (Opus 4.7’s 1M is in beta). Both flagships now offer 1M; Gemini was first to GA.

- Nano Banana Pro and Nano Banana 2 image generation bundled into the Gemini family. Nano Banana 2 is free in the Gemini app and produces images in 1-3 seconds; Nano Banana Pro (paid) is the high-quality variant that ranks among the top image generators in May 2026.

- Antigravity, Google’s agentic IDE, launched as the default coding interface for Gemini 3.1 Pro and now competes directly with Claude Code for the agentic-coding-tool slot. Powers a meaningful slice of the Gemini Pro use cases.

- Intelligence Index tied at 57. When 3.0 launched it briefly led Opus 4.6; Opus 4.7’s incremental improvements pulled even at the composite level.

Gemini 3.1 Pro at a Glance

| Spec | Gemini 3.1 Pro | Claude Opus 4.7 | GPT-5.4 |
|---|---|---|---|
| Vendor | Google DeepMind | Anthropic | OpenAI |
| Released | February 19, 2026 | April 2026 | March 2026 |
| Context window | 1M tokens (GA, first to GA) | 1M tokens (beta) | 400K tokens |
| SWE-bench Verified | 80.6% | 87.6% (win) | 78.4% |
| GPQA Diamond (reasoning) | 94.3% | 94.2% | 92.1% |
| Intelligence Index | 57 | 57 | 54 |
| Native voice processing | Yes (unique) | No | Via Realtime API only |
| Native video processing | Yes (unique) | No | No |
| Input pricing (per 1M tokens) | $2.00 (cheapest) | $5.00 | $3.00 |
| Output pricing (per 1M tokens) | $12.00 (cheapest) | $25.00 | $15.00 |
| Free tier | Generous (Gemini app; best free tier) | Limited (claude.ai) | Limited (chatgpt.com) |
| CLI agent | Gemini CLI | Claude Code | Codex CLI |
| IDE integration | Antigravity (default) | Claude Code (default) | VS Code, Cursor |

Key Features Decoded

1M-token context window (GA at standard pricing)

Gemini was the first flagship to ship 1M-token context at general availability without premium pricing — and as of May 2026 still has the cleanest GA implementation. Practical translation: you can fit roughly 1,500 pages of documentation, an entire mid-sized codebase, or a full month of customer support tickets into a single prompt without RAG infrastructure. Anthropic’s Opus 4.7 matches the spec but ships it in beta. For long-context production work, Gemini is the safer pick.
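To make the "1,500 pages in one prompt" claim concrete, here is a minimal back-of-the-envelope sketch. It assumes the common rule of thumb of about 4 characters per token for English text, which is not Gemini's actual tokenizer, and the function names, the 8,000-token output reserve, and the 2,600-character page are all hypothetical choices for illustration.

```python
# Rough check of whether a text corpus fits in a 1M-token context window.
# ASSUMPTION: ~4 characters per token is a rule of thumb for English text,
# not Gemini's real tokenizer. Use the API's token counter for real numbers.

CONTEXT_WINDOW_TOKENS = 1_000_000  # 1M-token window cited in this review
CHARS_PER_TOKEN = 4                # heuristic, hypothetical default


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Estimate the token count of `text` from its character length."""
    return int(len(text) / chars_per_token)


def fits_in_context(texts: list[str], reserve_for_output: int = 8_000) -> bool:
    """Return True if all texts plus an output reserve fit in the window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    page = "x" * 2_600  # hypothetical page of roughly 2,600 characters
    print(fits_in_context([page] * 1_500))
```

Under these assumptions, 1,500 such pages come to about 975,000 estimated tokens, which is why the figure sits right at the edge of the window rather than comfortably inside it.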

Native voice processing

Gemini 3.1 Pro processes audio natively in a single API call — no separate transcription step. You can send a voice file directly and get back transcription, content analysis, sentiment, speaker identification, or any reasoning task that depends on the audio content. The Live API extends this to real-time speech-to-speech conversation with sub-second latency. Claude Opus 4.7 doesn’t ship native audio; OpenAI’s GPT-5.4 handles audio only through the separate Realtime API.
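For a sense of what "a single API call" might look like, the sketch below only builds a request body and an endpoint URL; it does not send anything. It assumes the REST `generateContent` body shape Google documents for the Gemini API (a `contents` list whose `parts` carry `text` and base64 `inline_data`). The model ID `gemini-3.1-pro` and the helper names are hypothetical placeholders, so check Google's current model list and API reference before relying on any of it.

```python
import base64
import json

# ASSUMPTION: hypothetical model ID, used only for illustration.
MODEL_ID = "gemini-3.1-pro"


def endpoint_for(model_id: str) -> str:
    """Return a generateContent URL for the given model ID (v1beta path assumed)."""
    return (
        "https://generativelanguage.googleapis.com/v1beta/"
        f"models/{model_id}:generateContent"
    )


def build_audio_request(audio_bytes: bytes, prompt: str,
                        mime_type: str = "audio/mp3") -> dict:
    """Build a request body with one text part and one inline audio part."""
    return {
        "contents": [{
            "parts": [
                {"text": prompt},
                {"inline_data": {
                    "mime_type": mime_type,
                    # Inline media is sent as base64-encoded text.
                    "data": base64.b64encode(audio_bytes).decode("ascii"),
                }},
            ]
        }]
    }


if __name__ == "__main__":
    fake_audio = b"\x00\x01\x02"  # placeholder bytes, not a real recording
    body = build_audio_request(fake_audio, "Transcribe this and describe the speaker's sentiment.")
    print(endpoint_for(MODEL_ID))
    print(json.dumps(body, indent=2))
```

The point of the sketch is the shape: the audio travels alongside the prompt in the same request, with no separate transcription call in front of it.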

Native video processing

Drop a video file directly into the API and Gemini 3.1 Pro processes it natively for content understanding, scene-by-scene reasoning, and Q&A about the visual content. Long-form videos (10+ minutes) work without manual frame extraction. This is the single most differentiating capability vs Claude and GPT in May 2026 — neither competitor handles video natively in the production API. For content moderation, video summarization, scene analysis, or media-heavy enterprise workflows, Gemini is the only flagship that just works.

Strong reasoning, weaker coding

Gemini 3.1 Pro scores 94.3% on GPQA Diamond — graduate-level reasoning across physics, chemistry, biology — essentially tied with Claude Opus 4.7 (94.2%). For research synthesis, scientific reasoning, and complex analytical work, the two are interchangeable. Coding is the gap: 80.6% on SWE-bench Verified vs Opus 4.7’s 87.6%. The 7-point gap is real and matters for autonomous coding agents, but for most non-coding production work the difference doesn’t surface.

Antigravity — Google’s agentic IDE

Antigravity launched in early 2026 as Google’s answer to Cursor and the broader Claude Code / Gemini CLI tooling space. It’s an IDE built around Gemini 3.1 Pro that supports agentic coding workflows, MCP tool integration, and project-level context. Currently free during the early-access period; expected to convert to a Google AI Pro/Ultra perk by late 2026.

Google Gemini’s Native Voice + Video: The Real Differentiator

If you remember nothing else from this Gemini review, remember this: native voice and video processing in a single model is what makes Gemini structurally different from Claude and GPT in May 2026. The competitors handle these modalities through separate APIs, separate models, or preprocessing pipelines. Gemini handles them in-model.

Practical scenarios where this matters most:

- Voice agents and live conversation: Real-time speech-to-speech via the Gemini Live API works at sub-second latency. Claude can’t do this; GPT requires the separate Realtime API which adds integration complexity.

- Video content analysis: Drop a 30-minute video into the API, ask “what happens at the inflection point of the customer’s frustration in this support call recording?” and Gemini reasons over the actual video. No frame extraction, no separate transcription, no preprocessing.

- Audio understanding beyond transcription: Music analysis, sound effect identification, ambient audio context, speaker emotion — Gemini does all of this in a single call.

- Multimodal RAG: Long-form video tutorials, podcast archives, and meeting recordings become first-class indexable content without separate transcription pipelines. The 1M context window combined with native video is unique in the flagship category.

The catch: native multimodal isn’t free. Video tokens consume context aggressively (roughly 100-300 tokens per second of video depending on the resolution setting, going by Google’s published figures for earlier Gemini models), and the per-token pricing applies. For a 30-minute video at 200 tokens/second, that’s ~360,000 input tokens just for the video, more than a third of the 1M window, and at $2/1M tokens about $0.72 per video processed. Cheap enough for production-scale work; not free.

🔍 REALITY CHECK

Marketing Claims: “Gemini 3.1 Pro is the most capable AI model” (Google’s positioning around the Intelligence Index tie + multimodal lead).

Actual Experience: True for multimodal-native work and price-per-quality. False for coding, where Claude Opus 4.7 leads by 7 percentage points on SWE-bench Verified and 10 points on the harder Pro variant. The “most capable” framing depends entirely on which axis you measure. On the composite Artificial Analysis Intelligence Index Gemini ties Opus at 57 — a legitimate parity claim, but parity isn’t superiority. Engineering teams in particular should run their own evals on coding workflows; the benchmark gap in coding is real and matters.

Verdict: Gemini wins multimodal + price + free tier. Claude wins coding. They tie on composite intelligence. “Most capable” depends on your workload.

Benchmarks: Gemini vs Claude vs GPT

📊 Three-Way Flagship Benchmark Comparison (May 2026)

Higher is better. Composite Intelligence Index ties Gemini and Claude; coding gap goes to Claude.

The takeaway from the three-way comparison: Gemini and Claude tie on composite intelligence and reasoning, Claude wins coding, GPT trails both flagships on capability but maintains its market position through ecosystem reach (ChatGPT consumer scale, Microsoft enterprise integration). For pure capability per dollar, Gemini is the leader.

💡 Key Takeaway: For most teams, the right 2026 stack is Gemini 3.1 Pro for cost-sensitive and multimodal work, Claude Opus 4.7 for coding-heavy work. Routing requests by task captures both wins. The operational complexity of two API integrations is real but increasingly manageable.
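"Routing requests by task" can be as small as a lookup in front of your API clients. The sketch below is one possible shape, not a recommended production design: the model ID strings, the task labels, and the `Request` type are hypothetical, and the routing rules simply encode this review's conclusions.

```python
from dataclasses import dataclass

# ASSUMPTION: model IDs and task labels are illustrative placeholders.
GEMINI_PRO = "gemini-3.1-pro"
CLAUDE_OPUS = "claude-opus-4.7"

CODING_TASKS = {"code_generation", "code_review", "bug_fix", "refactor"}


@dataclass(frozen=True)
class Request:
    task: str
    has_audio_or_video: bool = False


def pick_model(req: Request) -> str:
    """Route multimodal work to Gemini, coding work to Claude, the rest to Gemini."""
    if req.has_audio_or_video:
        return GEMINI_PRO   # native voice/video, per this review
    if req.task in CODING_TASKS:
        return CLAUDE_OPUS  # SWE-bench Verified leader, per this review
    return GEMINI_PRO       # cost-leader default


if __name__ == "__main__":
    print(pick_model(Request("bug_fix")))
    print(pick_model(Request("summarization")))
    print(pick_model(Request("code_review", has_audio_or_video=True)))
```

The multimodal check comes first on purpose: a coding request that includes a screen recording still goes to the model that can read the video.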

The Google Gemini Family: Pro, Flash, and Nano Banana

Gemini in May 2026 is no longer a single model — it’s a product line. Knowing which member to pick for which job saves money and improves results.

Gemini 3.1 Pro — the flagship (this review’s focus)

The capability ceiling of the family. 1M context, native multimodal, 80.6% SWE-bench, $2/$12 API. Use Pro when reasoning quality, multimodal capability, or long-context handling is load-bearing for the task.

Gemini 3 Flash family — the cost tier

Faster, cheaper, slightly less capable. The right default for high-volume API work where Pro’s capability isn’t load-bearing — chatbots, content generation, classification, summarization, simple agent loops. We covered this family in detail in the Gemini Flash review; the short version is that Flash handles maybe 70% of typical production LLM workloads at ~10% of Pro’s API cost.
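The "70% of workloads at ~10% of the cost" figure turns into a blended bill with one line of arithmetic. The sketch below assumes the 70% is a share of token volume (the review doesn't specify whether it counts requests or tokens) and that Flash costs a flat 10% of Pro; the function name is hypothetical.

```python
def blended_cost_fraction(flash_share: float, flash_cost_ratio: float) -> float:
    """Cost of a Pro + Flash mix as a fraction of sending everything to Pro.

    flash_share: fraction of token volume sent to Flash (0 to 1).
    flash_cost_ratio: Flash cost relative to Pro (0.10 means 10% of Pro).
    """
    if not 0.0 <= flash_share <= 1.0:
        raise ValueError("flash_share must be between 0 and 1")
    pro_share = 1.0 - flash_share
    return pro_share + flash_share * flash_cost_ratio


if __name__ == "__main__":
    # 30% stays on Pro at full price, 70% moves to Flash at 10% of the price.
    print(round(blended_cost_fraction(0.70, 0.10), 2))
```

With the review's numbers the mix costs about 37% of an all-Pro bill, so most of the saving comes from simply not sending routine traffic to the flagship.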

Nano Banana Pro / Nano Banana 2 — image generation

Google’s image generation models, integrated directly into the Gemini app and API. Nano Banana 2 generates images in 1-3 seconds and is free in the Gemini app — the fastest and most accessible image generator in the flagship category. Nano Banana Pro is the higher-quality variant that ranks among the leading image generators in May 2026 (covered in detail in our best AI image generators roundup and the Nano Banana Pro review).

Pricing & Access

| Tier | Price | What You Get | Best For |
|---|---|---|---|
| Gemini app (free) | $0 | Gemini 3.1 Pro with daily limits, Nano Banana 2 image gen, Live multimodal sessions, 5GB storage | Casual users, hobbyists |
| Google AI Pro | $19.99/mo | Higher Pro limits, Nano Banana Pro, longer Live sessions, 2TB storage, Workspace integration | Power users, prosumers |
| Google AI Ultra | $249.99/mo | Highest Pro limits, all premium features, Antigravity priority, 30TB storage, advanced video | Professionals, content creators |
| API (pay-per-use) | $2 input / $12 output per 1M tokens | Direct API access via Google AI Studio + Vertex AI; scales with usage | Developers, production workloads |
| Vertex AI Enterprise | Custom | API plus enterprise features: VPC, audit logs, customer-managed encryption, SLAs | Enterprise, regulated industries |

The free tier is the most generous in the flagship category: both ChatGPT Free and Claude.ai Free put their flagship models behind paywalls, while Gemini gives you actual Pro access at $0 with reasonable daily limits. For casual users this is genuinely worth using. For developers, the API pricing at $2/$12 per 1M tokens is the cheapest of the three flagships and saves between 52% (output-only) and 60% (input-only) vs Claude Opus 4.7 at $5/$25, which works out to roughly 56-57% on typical input-heavy workloads.
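The savings figure depends on how your traffic splits between input and output tokens, so it is worth running the arithmetic on your own mix. This sketch uses only the list prices quoted in this review ($2/$12 for Gemini 3.1 Pro, $5/$25 for Claude Opus 4.7); the function names are hypothetical, and volume discounts or caching are ignored.

```python
# USD per 1M tokens as (input, output), taken from the prices quoted in this review.
GEMINI_PRICE = (2.00, 12.00)
OPUS_PRICE = (5.00, 25.00)


def cost(price, input_tokens, output_tokens):
    """Dollar cost of a workload at the given (input, output) per-1M prices."""
    in_price, out_price = price
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def savings_vs_opus(input_tokens, output_tokens):
    """Fraction saved by running the same workload on Gemini instead of Opus."""
    gemini = cost(GEMINI_PRICE, input_tokens, output_tokens)
    opus = cost(OPUS_PRICE, input_tokens, output_tokens)
    return 1 - gemini / opus


if __name__ == "__main__":
    # Input:output ratios of 1:1, 5:1, and 10:1 per 1M output tokens.
    for ratio in (1, 5, 10):
        pct = savings_vs_opus(ratio * 1_000_000, 1_000_000) * 100
        print(f"{ratio}:1 input/output -> {pct:.1f}% saved")
```

At these list prices the saving is about 53% for a 1:1 mix, 56% at 5:1, and 57% at 10:1, bounded by 52% for pure output and 60% for pure input.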

Who Should Use Gemini (and Who Shouldn’t)

- Cost-sensitive teams or startups: Gemini 3.1 Pro at $2/$12 per 1M tokens saves roughly 56-57% per month vs Claude Opus 4.7 on typical input-heavy workloads, and between 52% and 60% across any input/output mix. The right default flagship for cost-conscious work.

- High-volume API workloads (RAG, content generation, summarization): The price advantage compounds dramatically at scale. The capability gap vs Claude on these workloads is small enough not to matter; the cost gap is large enough that it does.

- Multimodal applications (voice, video, real-time): Native voice + video processing is unique to Gemini in the flagship category. If your workflow involves audio, video, or live multimodal interaction, Gemini is the only choice that just works.

- Google ecosystem teams: Native integration with Workspace, Vertex AI, AI Studio, and Antigravity. If your stack is already Google, friction is minimal.

- Casual and hobbyist users: The free tier in the Gemini app is the most generous in the flagship category. Real Pro access plus image generation plus Live sessions for $0 is hard to beat.

- Long-context production work (1500+ page docs, large codebases): Gemini’s 1M context is GA at standard pricing — Anthropic’s 1M is still in beta. For mission-critical long-context work, Gemini is the safer choice.

- Skip Gemini if: Your work is coding-heavy and the 7-point SWE-bench gap matters (use Claude Opus 4.7), you’re locked into the OpenAI ecosystem (use GPT-5.4), or you specifically need a vendor outside Google for compliance reasons.

🔍 REALITY CHECK

Marketing Claims: “Gemini integrates seamlessly with Workspace” (Google’s positioning around Docs / Sheets / Gmail / Meet integration).

Actual Experience: True for the basics — Gemini-in-Docs, Gemini-in-Sheets, Gemini-in-Gmail all work and are genuinely useful for low-effort drafting and summarization. Less true for advanced agentic workflows where the integration is still surface-level. The deep “Gemini orchestrates your Workspace data via MCP-style tool calls” vision is partially shipped, partially aspirational. For day-to-day drafting and summarization the integration is excellent; for full agentic Workspace orchestration, expect to wait through 2026.

Verdict: Workspace integration is real and useful at the surface level. The deep agentic version is partially shipped. Set expectations accordingly.

FAQs

Is Gemini better than ChatGPT in 2026?

On capability — yes. Gemini 3.1 Pro scores 57 on the Artificial Analysis Intelligence Index vs GPT-5.4 at 54. On reasoning (94.3% vs 92.1% on GPQA Diamond), context window (1M vs 400K), pricing ($2/$12 vs $3/$15), and multimodal (native voice + video vs OpenAI’s separate Realtime API), Gemini leads. ChatGPT still wins on consumer scale and ecosystem reach. For pure capability per dollar, Gemini.

Is Gemini better than Claude in 2026?

Tied at the composite level: both score 57 on the Intelligence Index. Gemini wins on price ($2/$12 vs $5/$25 per 1M tokens, roughly 2.1-2.5× cheaper), multimodal (native voice + video), and free tier. Claude wins on coding (87.6% vs 80.6% SWE-bench Verified). Pick based on workload, not on a single “better” claim. Most teams in 2026 use both, routing requests to whichever model is best per task; see our Claude Code vs Gemini CLI comparison for the developer-tooling angle.

Is Gemini free?

Yes — the Gemini consumer app gives you Gemini 3.1 Pro access (with daily limits), Nano Banana 2 image generation, and Live multimodal sessions for $0. It’s the most generous free tier in the flagship category. Both ChatGPT Free and Claude.ai Free put their flagship models behind paywalls; Gemini doesn’t. For developers, the API has a free tier with daily request limits via Google AI Studio.

What’s the difference between Gemini Pro and Gemini Flash?

Pro is the flagship — highest capability, highest API cost, full multimodal. Flash is the cost tier — faster, ~10× cheaper API, slightly lower capability ceiling but handles ~70% of typical production LLM workloads adequately. Use Pro when capability is load-bearing; use Flash for high-volume work where price-per-token matters more than the marginal capability gain. Detailed comparison in our Gemini Flash review.

Can Gemini really process video natively?

Yes. Drop a video file directly into the API and Gemini 3.1 Pro processes it natively for content understanding, scene-by-scene reasoning, and Q&A. Long-form videos (10+ minutes) work without manual frame extraction. Claude Opus 4.7 cannot do this; OpenAI’s GPT-5.4 cannot either. This is the single most differentiating capability in the May 2026 flagship category.

What is Antigravity?

Google’s agentic IDE built around Gemini 3.1 Pro — the equivalent of Anthropic’s Claude Code or Cursor’s IDE for the Google ecosystem. Supports MCP tool integration, project-level context, autonomous coding workflows. Currently free during early access; expected to convert to a Google AI Pro/Ultra perk by late 2026.

How much does the Gemini API cost?

$2 input / $12 output per 1M tokens for Gemini 3.1 Pro via Google AI Studio and Vertex AI. That’s 2.5× cheaper on input and about 2.1× cheaper on output than Claude Opus 4.7 ($5/$25), and meaningfully cheaper than GPT-5.4 ($3/$15). Volume discounts are available via Vertex AI for enterprise tiers. The Gemini Flash family is significantly cheaper still for non-flagship workloads.

When did Gemini 3.1 Pro launch?

February 19, 2026 — replacing Gemini 3.0 Pro from November 2025. The 3.1 update brought stronger reasoning, retained the 1M context, added native voice + video processing in the production API, and improved long-context instruction-following.

✅ What Gemini Wins At

- ✓ Native voice + video processing (only flagship)

- ✓ 1M context at GA standard pricing

- ✓ API price ($2/$12) — cheapest flagship

- ✓ Generous free tier in Gemini app

- ✓ Workspace + Vertex AI deep integration

❌ Where Gemini Falls Short

- ✗ Coding benchmarks trail Claude Opus 4.7 by 7 points

- ✗ MCP-Atlas tool use slightly behind Opus (73.9% vs 77.3%)

- ✗ Some Workspace integrations still surface-level, not agentic

- ✗ Antigravity IDE still maturing vs more established competitors

Class-leading on multimodal, price, and free-tier generosity. Tied with Claude Opus 4.7 on composite intelligence. Half a star off because the coding-benchmark gap is real and matters for engineering teams, and some Workspace integrations are less mature than Google’s marketing implies.

💡 Key Takeaway: Gemini in May 2026 is the cost-leader flagship that ties Claude on composite intelligence and uniquely wins on multimodal. The right default for most production work that isn’t coding-heavy. Pair with Claude Opus 4.7 if your stack also runs autonomous coding agents — together they cover the full flagship workload spectrum.

The Final Verdict

Google Gemini in May 2026 is the strongest the lineup has ever been and the most pragmatic flagship for the broadest set of workloads. The composite Intelligence Index ties it with Claude Opus 4.7 (57 = 57). The price is the cheapest in the flagship category by a meaningful margin. The native voice + video processing is genuinely unique. The free tier in the Gemini app is the most generous of the three flagships. For most non-coding production work, picking Google Gemini as your default flagship is the high-leverage choice.

The honest caveat: if your work is coding-heavy and reliability matters more than cost, Claude Opus 4.7’s 7-point SWE-bench lead is real and pays back the premium price. The realistic 2026 answer for serious teams is “use both, route by task” — Gemini for cost-sensitive and multimodal work, Claude for coding-heavy work, GPT for whatever your existing OpenAI integration covers. The “pick one flagship” framing is a 2024 question with a 2026 answer that’s evolved past it.

Related Reading

- Gemini Flash Review — the cheap-tier Gemini family below Pro

- Google AI Pro & Ultra Review — consumer subscription tiers bundling Gemini 3.1 Pro

- Claude Code vs Gemini CLI — terminal-agent head-to-head between the two ecosystems

- Nano Banana Pro Review — Google’s premium image generator inside the Gemini family

- Best AI Image Generators 2026 — where Nano Banana fits in the broader image-gen market

- ChatGPT Review — the OpenAI flagship in the Gemini / Claude / GPT triangle

- Claude Code Review — Anthropic’s CLI agent powered by Opus 4.7

- The Complete AI Tools Guide — buyer’s guide for picking the right tool from 200+ tested

Founder of AI Tool Analysis. Tests every tool personally so you don’t have to. Covering AI tools for 10,000+ professionals since 2025.

Last Updated: May 1, 2026

Model Tested: Google Gemini 3.1 Pro (released February 19, 2026), with comparison context to Gemini 3 Flash family, Nano Banana Pro / Nano Banana 2 image gen, Claude Opus 4.7 (Anthropic), and GPT-5.4 (OpenAI).

Slug Note: Renamed from /gemini-3-review/ to /gemini-review/ on May 1, 2026 for evergreen URL. 301 redirect in place. New slug ages cleanly across future Gemini cycles (3.5, 4, etc).

Next Review Update: August 2026 (or sooner when Google ships a major Gemini upgrade)
