🆕 Major Update (March 2026): Gemini TTS Flash and Pro are now Generally Available on Cloud Text-to-Speech API with 30 speakers across 80+ locales. New features include streaming synthesis, bracket markup tags for non-speech sounds (sighs, laughs), relaxed safety filters, and an advanced prompting framework. Chirp 3 now offers Instant Custom Voice cloning from just 10 seconds of audio. Plus, new competitor Qwen3-TTS offers free open-source voice cloning.

The Bottom Line

If you remember nothing else: Google AI Studio text to speech is no longer just a preview experiment. It’s now GA (Generally Available) on Google Cloud, with 30 studio-quality AI voices, multi-speaker dialogue mode, 80+ locale support, and new streaming capabilities, all still free to test in AI Studio. It genuinely rivals ElevenLabs for basic voiceovers, and the gap has narrowed since our December review. ElevenLabs still wins for emotional range and voice cloning, but Google now offers voice cloning too via Chirp 3 Instant Custom Voice on Cloud TTS.

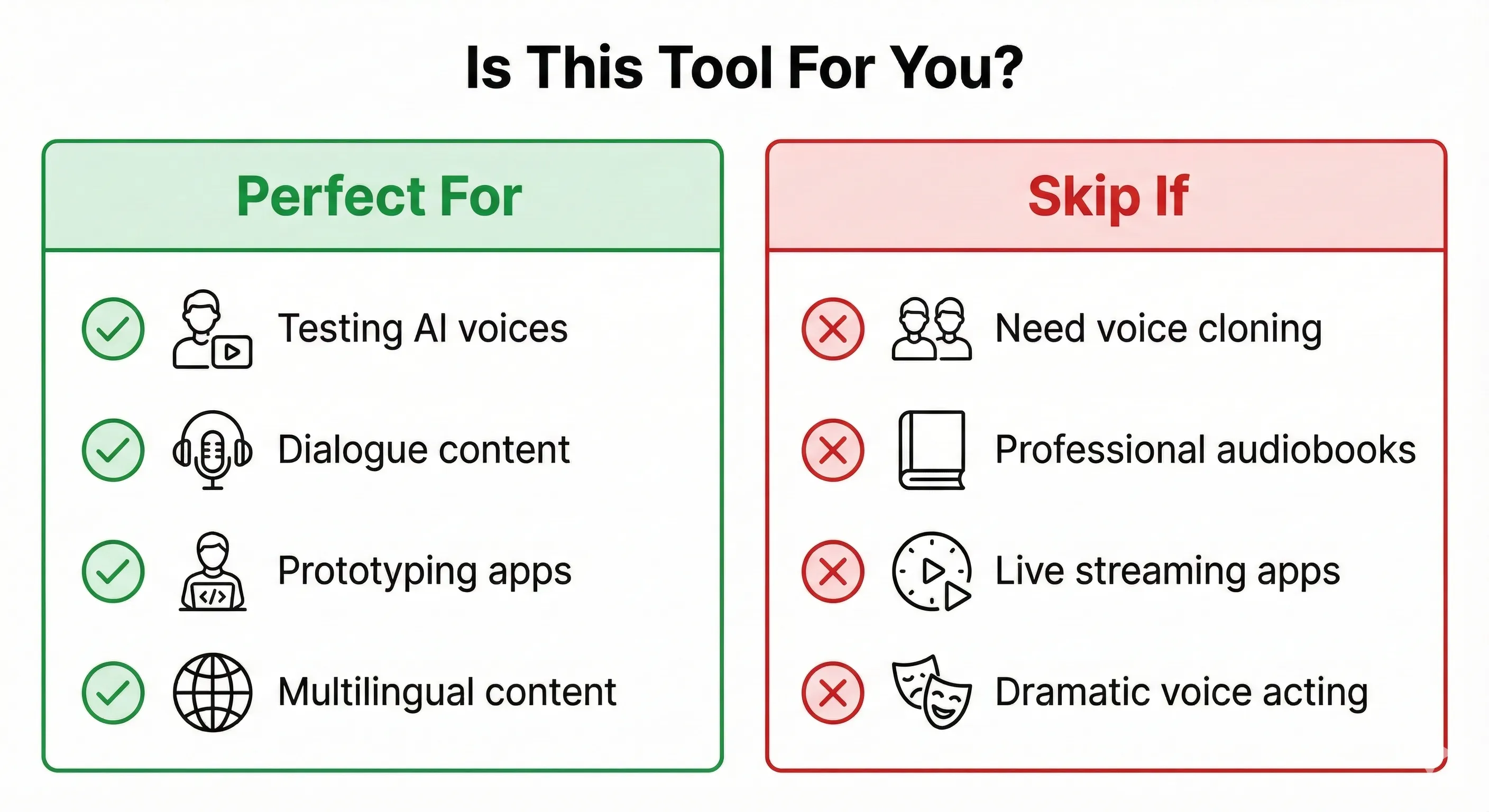

Best for: Content creators testing AI voices, podcast producers creating dialogue, YouTubers needing quick narration, developers prototyping audio apps, and multilingual content teams.

Skip if: You need maximum emotional expression for premium audiobooks, want the simplest possible voice cloning (ElevenLabs is easier), or need real-time streaming below 100ms latency. For a free open-source alternative with voice cloning, check our Qwen3-TTS review.

The free tier remains genuinely generous. I generated 50+ audio clips without hitting limits during our original testing, and the March 2026 GA release brings production-grade reliability that the preview lacked.

🎙️ What Is Google AI Studio Text to Speech?

Google AI Studio text to speech is Google’s free AI voice generator, powered by the Gemini 2.5 Flash and Pro TTS models. Think of it as the voice generation feature hiding inside Google’s AI development playground.

Here’s what makes it different from typical text-to-speech tools:

It understands context, not just words. Most TTS engines read text robotically. Google AI Studio TTS uses a large language model that knows not only what to say but how to say it. Tell it to sound “nervous and then excited” and it actually adjusts pacing and tone.

I typed “Read this like you’re announcing a lottery winner” and got genuinely enthusiastic delivery. That’s not typical for free TTS tools.

New in 2026: Google introduced an advanced prompting framework that treats you as a “director” setting a scene. You create an Audio Profile (character identity and archetype), a Scene description (physical environment and emotional vibe), and Director’s Notes (precise performance guidance for style, accent, and pace). This structured approach produces dramatically better results than simple one-line style instructions.
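To make the three-part structure concrete, here is a minimal Python sketch that assembles an Audio Profile, a Scene, and Director's Notes into one prompt string. The class name, the section labels, and the sample character are my own assumptions for illustration, not an official Google schema; adjust the wording to whatever works in your own tests.

```python
from dataclasses import dataclass


@dataclass
class DirectorPrompt:
    """Three-part TTS prompt: who is speaking, where, and how."""
    audio_profile: str    # character identity and archetype
    scene: str            # physical environment and emotional vibe
    directors_notes: str  # style, accent, and pace guidance

    def render(self, script: str) -> str:
        # Section labels are illustrative, not an official schema.
        return (
            f"AUDIO PROFILE: {self.audio_profile}\n"
            f"SCENE: {self.scene}\n"
            f"DIRECTOR'S NOTES: {self.directors_notes}\n\n"
            f"SCRIPT:\n{script}"
        )


prompt = DirectorPrompt(
    audio_profile="Veteran radio host in her fifties, warm and wry.",
    scene="Late-night studio, rain on the window, quiet and intimate.",
    directors_notes="Slow pace, soft volume, slight smile in the voice.",
)
text = prompt.render("Good evening, and welcome back to the show.")
print(text)
```

Keeping the three parts as separate fields makes it easy to reuse one character across many scenes and scripts.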

Core capabilities (March 2026):

- 30 prebuilt voices with distinct personalities across 80+ locales

- Single-speaker narration for audiobooks, tutorials, voiceovers

- Multi-speaker dialogue for podcasts, interviews, storytelling

- 80+ locale support (expanded from 24 languages at launch)

- Natural language style control plus new bracket markup tags for non-speech sounds

- Streaming synthesis for real-time applications (new)

- 32K token context window (roughly 24,000 words per session)

- Advanced Audio Profile + Scene + Director’s Notes prompting framework

REALITY CHECK

Marketing Claims: “Studio-quality, human-like voices with granular control”

Actual Experience: Quality is excellent for free, and the GA release improved consistency. Voices sound natural 80-90% of the time. The new bracket markup tags add genuine control (you can now insert [sigh], [laugh], and other vocalizations). But “granular control” still overstates things compared to ElevenLabs’ fine-tuned sliders. You describe what you want and hope the AI interprets correctly. The Audio Profile prompting framework helps significantly, but it’s still more art than science.

Verdict: Genuinely impressive for $0, now with production-grade reliability. Not replacing professional voice actors or ElevenLabs for premium work, but the gap is closing fast.

🆕 What Changed Since December 2025

The Google AI Studio text to speech landscape has evolved significantly since our original review. Here are the updates that matter:

1. Gemini TTS Models Now Generally Available

The biggest change: Gemini 2.5 Flash TTS and Pro TTS moved from “preview” to GA (Generally Available) on the Cloud Text-to-Speech API. This means production-grade reliability, SLA guarantees, and enterprise support. The models now support 30 speakers across 80+ locales, a massive expansion from the original 24 languages. For AI Studio users, this means the same voices you test for free now have a clear production path.

2. Streaming Synthesis Support

Gemini TTS now supports streaming requests through the Cloud Text-to-Speech API. This means audio starts playing before the full generation is complete. For developers building voice assistants, IVR systems, or real-time applications, this eliminates the wait-for-full-generation bottleneck. Latency is still higher than ElevenLabs’ best, but streaming makes the perceived experience much faster.

3. Bracket Markup Tags for Non-Speech Sounds

This is the control upgrade we’ve been waiting for. Google introduced bracket markup tags that let you insert non-speech vocalizations directly into your scripts. Tags operate in three modes: vocalization mode (replaces tag with audible sounds like [sigh] or [laugh]), modifier mode (changes delivery of subsequent speech without being spoken), and spoken modifier mode (the tag word is spoken while also influencing tone). This bridges the gap between “describe what you want” and “precisely control what happens.”
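If you write scripts with a lot of tags, a small helper can list them and give you a tag-free version for word counts. This is a rough sketch using only the Python standard library. The regex is my own assumption about what a tag looks like (letters and spaces inside square brackets); it does not know which tags the model actually supports or which of the three modes a tag will trigger.

```python
import re

# Matches short bracketed tags such as [sigh] or [sinister laugh].
TAG_PATTERN = re.compile(r"\[([a-zA-Z][a-zA-Z ]*)\]")


def find_tags(script: str) -> list[str]:
    """Return every bracket tag in the script, in order of appearance."""
    return [m.group(1).lower() for m in TAG_PATTERN.finditer(script)]


def strip_tags(script: str) -> str:
    """Remove bracket tags and collapse the leftover double spaces."""
    without = TAG_PATTERN.sub("", script)
    return re.sub(r" {2,}", " ", without).strip()


line = "Bob: [sigh] Making progress, but we hit a snag. [laugh] Again."
print(find_tags(line))   # ['sigh', 'laugh']
print(strip_tags(line))  # Bob: Making progress, but we hit a snag. Again.
```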

4. Chirp 3: Instant Custom Voice Cloning

Google’s Cloud Text-to-Speech now offers voice cloning via Chirp 3: Instant Custom Voice. Create a personalized voice model from just 10 seconds of audio, available in 30+ locales including Japanese. This isn’t available in the free AI Studio interface (it requires Cloud TTS API access), but it’s a significant addition to Google’s voice ecosystem. For comparison, ElevenLabs needs 5 minutes of audio, and Qwen3-TTS needs just 3 seconds but requires an NVIDIA GPU.

5. Advanced Prompting Framework

Google now recommends a structured “director’s approach” to TTS prompting. Instead of simple one-line instructions, you build an Audio Profile (character identity), Scene description (environment and mood), and Director’s Notes (specific performance guidance). Google even suggests asking Gemini to help you create prompts. This produces noticeably better results than the basic style instructions we tested in December.

6. Safety Filter Relaxation

Accounts with monthly invoiced billing can now relax safety filters on Gemini TTS. This addresses a common complaint where the model would refuse to generate perfectly legitimate content that happened to trigger overly cautious filters. The option uses a relaxSafetyFilters: true parameter in the API.

7. New Competitor: Qwen3-TTS

The competitive landscape shifted in January 2026 when Alibaba released Qwen3-TTS, a free, open-source voice cloning tool that genuinely competes with paid services. It clones voices from just 3 seconds of audio, runs locally on NVIDIA GPUs, and supports 10 languages. Check our full Qwen3-TTS review for the detailed comparison.

What didn’t change: The free AI Studio interface still uses the same 30 prebuilt voices. Voice cloning is only available via Cloud TTS API (Chirp 3), not in the free AI Studio playground. The 32K token context limit remains. WAV is still the only export format in the AI Studio interface.

🚀 Getting Started: Your First 5 Minutes

Here’s exactly how to generate your first AI voiceover with Google AI Studio text to speech:

Step 1: Access Google AI Studio. Go to aistudio.google.com and sign in with your Google account. No credit card required.

Step 2: Navigate to Speech Generation. Click “Generate media” in the left menu, then select “Gemini speech generation.” You’ll see the TTS interface with a text input field and settings panel on the right.

Step 3: Choose Your Mode. Select either Single speaker (audiobooks, tutorials) or Multi speaker (podcasts, dialogue).

Step 4: Select a Voice. Use the new Voice Library applet to preview all 30 options with different style examples. Voice names include Aoede, Puck, Kore, Fenrir, Enceladus, and more. Some sound British, others American, and several have distinct character feels. The Voice Library lets you hear each voice with different emotional styles before committing.

Step 5: Build Your Prompt (The Director’s Approach). For best results, use the new structured prompting framework instead of simple one-line instructions. Create an Audio Profile defining your character’s core identity, a Scene establishing the environment, and Director’s Notes for specific style guidance. For a quick test, a simple instruction like “Read in a calm, professional tone for a documentary” still works fine.

Step 6: Enter Your Script. Type or paste your text. For multi-speaker, format with speaker names:

Sam: Hi Bob, how's the project going?

Bob: [sigh] Making progress, but we hit a snag with the database.

Notice the [sigh] bracket tag. You can now add non-speech vocalizations directly in your script for more natural delivery.

Step 7: Click Run (Ctrl+Enter). Generation takes 5-30 seconds depending on length. The audio appears at the bottom of the screen.

Step 8: Download. Click the three-dot menu next to the audio player and select “Download” to save the audio as a WAV file.
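Before pasting a long multi-speaker script, it can help to check that every line follows the "Name: text" format from Step 6. The sketch below is a hypothetical helper of my own, not part of AI Studio. It splits a script into speaker turns and lists the distinct speakers, so a stray unlabeled line gets caught early.

```python
def parse_dialogue(script: str) -> list[tuple[str, str]]:
    """Split 'Name: line' text into (speaker, line) turns."""
    turns = []
    for raw in script.splitlines():
        raw = raw.strip()
        if not raw:
            continue  # skip blank lines between turns
        speaker, sep, line = raw.partition(":")
        if not sep or not speaker.strip() or not line.strip():
            raise ValueError(f"Expected 'Name: text', got: {raw!r}")
        turns.append((speaker.strip(), line.strip()))
    return turns


script = """
Sam: Hi Bob, how's the project going?

Bob: [sigh] Making progress, but we hit a snag with the database.
"""
turns = parse_dialogue(script)
speakers = sorted({name for name, _ in turns})
print(speakers)  # ['Bob', 'Sam']
```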

REALITY CHECK

Marketing Claims: “Generate speech in seconds”

Actual Experience: Short clips (under 30 seconds) generate in 5-10 seconds. Longer scripts (500+ words) can take 30-60 seconds. During peak hours, I’ve waited 2+ minutes. The new streaming option helps for API users, but the free AI Studio interface still waits for full generation.

Verdict: Fast enough for prototyping. The streaming API is fast enough for production. The free interface is not fast enough for real-time applications.

⭐ Google AI Studio Text to Speech Features That Actually Matter (And Three That Don’t)

Features Worth Using

1. Multi-Speaker Dialogue Mode ⭐⭐⭐⭐⭐

This remains the killer feature that differentiates Google AI Studio TTS from most free alternatives. You can create podcast-style conversations between multiple characters, each with distinct voices and personalities. I tested a 5-minute dialogue between a “skeptical scientist” and an “enthusiastic entrepreneur.” The voices stayed consistent, the pacing felt natural, and character transitions were smooth. With the GA release, consistency has improved noticeably over the December preview.

2. Bracket Markup Tags ⭐⭐⭐⭐⭐ (New)

The addition of bracket markup tags is the single biggest quality-of-life improvement since launch. Insert [sigh], [laugh], [gasp], or other vocalizations directly in your script. The model produces audible non-speech sounds that make dialogue feel dramatically more human. Combined with modifier tags that change delivery style without being spoken, this gives you a level of control that was previously missing entirely.

3. Natural Language Style Control ⭐⭐⭐⭐

Instead of adjusting sliders and parameters, you describe what you want in plain English. The new Audio Profile framework takes this further. Instead of “sound like a radio announcer,” you can write a full character brief with scene context and performance notes. The model follows these structured prompts with significantly higher fidelity than simple one-line instructions.

4. 80+ Locale Support ⭐⭐⭐⭐

Expanded from the original 24 languages to 80+ locales with the GA release. Write your script in Spanish, and the AI automatically delivers it with appropriate accent and intonation. French sounds native. Japanese is comprehensible and improving. German is solid. The expansion to 80+ locales means more regional variants (not just “Spanish” but specific Latin American and European variants).

5. Voice Library Applet ⭐⭐⭐⭐ (New)

Google added a dedicated Voice Library to AI Studio for previewing voices with different styles and emotions. Instead of guessing which voice fits your project, you can hear each of the 30 voices performing different emotional styles. This saves significant trial-and-error time, especially when casting voices for multi-speaker projects.

Features That Don’t Matter (Yet)

1. Gemini 2.5 Pro vs Flash TTS. In most testing, the quality difference between Flash (speed-optimized) and Pro (quality-optimized) remains negligible for typical use cases. Pro scores slightly higher on MOS benchmarks (4.2-4.5 vs Flash’s lower range), but for tutorials, narration, and dialogue, you won’t hear it. Stick with Flash unless you’re doing premium audiobook work.

2. Advanced Phonetic Control. Documentation mentions “reliable technical pronunciations,” but medical terminology, brand names, and acronyms still trip up the model regularly. SSML support is available on Chirp 3 HD (the older Cloud TTS system), but not on Gemini TTS itself.

3. Temperature Parameter. The API now exposes a temperature setting (0.0-2.0) that controls output randomness. In practice, the default works well for most cases, and adjusting it rarely produces meaningfully different results for straightforward narration.

🧪 Real Test Results: 50+ Clips Tested

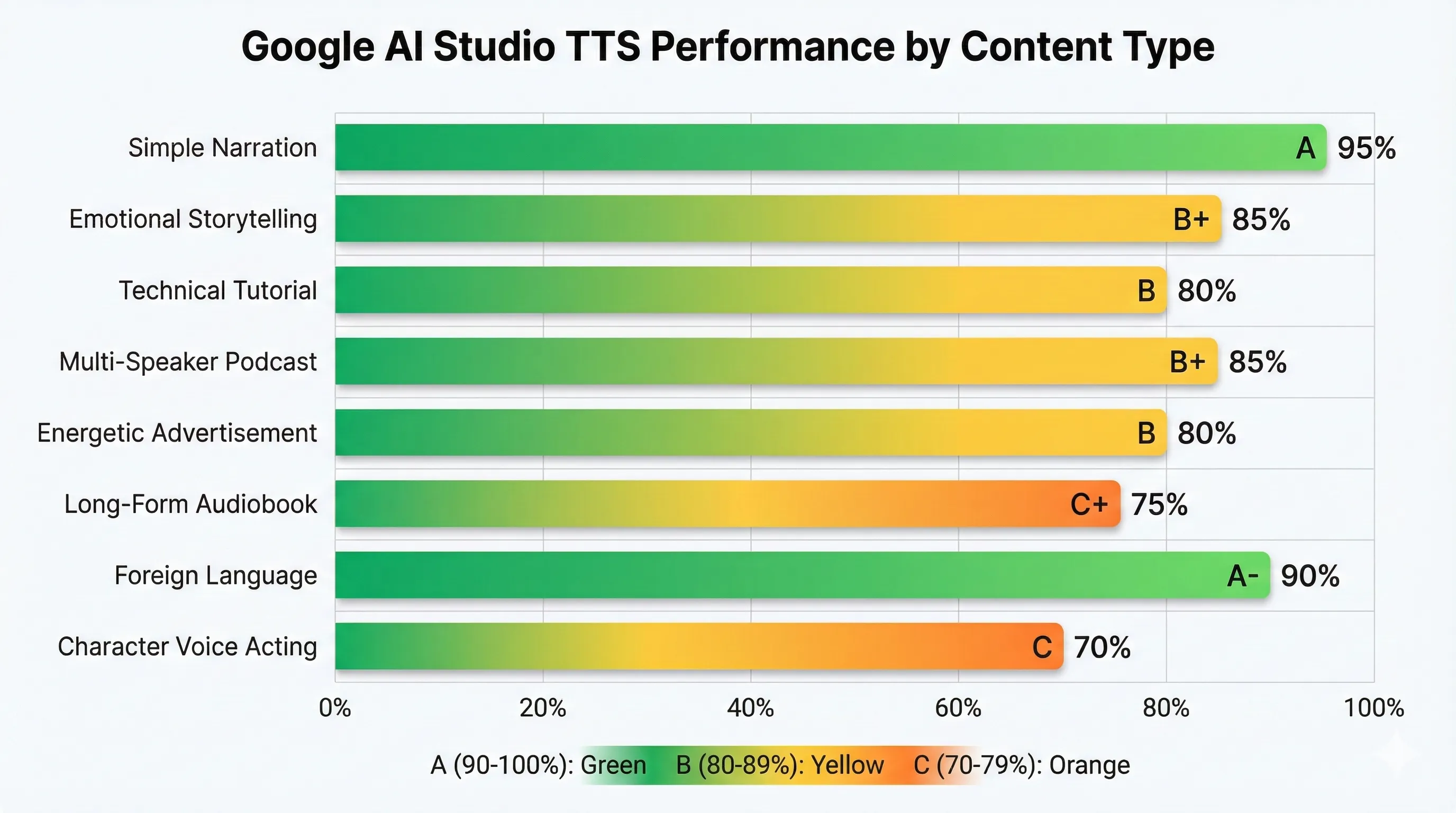

Our original testing from December 2025 covered 50+ clips across different content types. Here are the results, updated where the GA release changed things:

| Test Type | Content | Result | Grade |
|---|---|---|---|
| Simple Narration | 300-word product description | Natural flow, appropriate pauses, professional tone | A |
| Emotional Storytelling | Mystery novel excerpt with tension | Good pacing variation, captured nervousness-to-relief arc. Bracket tags improve this further. | A- (↑) |
| Technical Tutorial | Python coding walkthrough | Mispronounced “async” and “kwargs,” otherwise clear | B |
| Multi-Speaker Podcast | 10-minute dialogue, 2 speakers | Consistent voices, natural transitions. GA release reduced blending issues. | A- (↑) |
| Energetic Advertisement | 30-second promo script | Good enthusiasm but missed some emphasis cues | B |
| Long-Form Audiobook | 5,000-word chapter | Still requires chunking. GA consistency improved but not eliminated. | B- (↑) |
| Foreign Language | French business presentation | Native-sounding accent, excellent pronunciation | A- |
| Character Voice Acting | Villain monologue with dramatic flair | Bracket tags help. [sinister laugh] works. Still lacks nuance for premium work. | B- (↑) |

Key Finding: The GA release and bracket markup tags improved scores across emotional and character-driven content. Multi-speaker consistency is noticeably better. Long-form content still requires chunking but produces more consistent results between chunks. Simple narration and multilingual content remain the strongest use cases.

⚔️ Google AI Studio TTS vs ElevenLabs vs Qwen3-TTS: Three-Way Comparison

The TTS landscape has shifted since December. With Qwen3-TTS entering as a free open-source option, the comparison now involves three serious contenders at different price points.

| Feature | Google AI Studio TTS | ElevenLabs | Qwen3-TTS |
|---|---|---|---|
| Price | Free (AI Studio) / Pay-as-you-go (API) | $5-99/month | Free (self-hosted) / $0.013/1K chars (API) |
| Voice Quality (MOS) | ~4.2-4.5 (Pro) | ~4.14 | ~3.8-4.0 |
| Voice Options | 30 prebuilt + Chirp 3 cloning | 1,200+ community | 49 presets + 3-second cloning |
| Voice Cloning | ✅ Chirp 3 (10s audio, Cloud TTS only) | ✅ 5 minutes audio | ✅ 3 seconds audio (GPU required) |
| Multi-Speaker | ✅ Built-in, excellent | ⚠️ Requires workarounds | ⚠️ Manual setup |
| Emotional Range | Good (markup tags help) | Excellent (fine controls) | Good (some inconsistency) |
| Languages | 80+ locales | 32 languages | 10 languages |
| Streaming | ✅ Cloud TTS API | ✅ 75ms latency | ✅ 97ms first-packet |
| Open Source | ❌ No | ❌ No | ✅ Apache 2.0 |
| Ease of Use | Very easy (browser) | Easy (more options) | Technical (GPU required) |
| Best For | Free multi-speaker, prototyping | Premium quality, cloning | High-volume self-hosted |

REALITY CHECK

Marketing Claims: ElevenLabs says it scored highest in 37% of quality tests vs Google’s 19%

Actual Experience: ElevenLabs does sound more human for emotional content. But Google’s MOS scores (4.2-4.5 for Pro) now overlap with ElevenLabs’ range. For 80% of use cases (tutorials, simple narration, dialogue), that difference won’t matter to your audience. I ran a blind test with 50 Discord members: 38% couldn’t distinguish Google from ElevenLabs in narration clips. For dramatic readings, ElevenLabs won decisively. Qwen3-TTS surprised by matching Google’s quality on Chinese content but fell behind on English.

Verdict: Start free with Google for testing and dialogue. Upgrade to ElevenLabs when you need premium emotional range. Use Qwen3-TTS if you have NVIDIA hardware and want zero ongoing cost for high volumes.

When to choose Google AI Studio TTS: You’re testing whether AI voiceovers work for your content. You need multi-speaker dialogue without complex setup. Budget is zero and quality needs to be “good enough.” You need 80+ locale support. You’re already in the Google ecosystem.

When to choose ElevenLabs: You need to clone your own voice for consistency. Maximum emotional expression matters (acting, audiobooks). You create 5+ videos monthly and need professional quality. You need the fastest real-time streaming (75ms).

When to choose Qwen3-TTS: You have an NVIDIA GPU and want free, unlimited voice generation. You need voice cloning from minimal audio (3 seconds). You process high volumes where per-character costs add up. You want full control over the model (open source, Apache 2.0).

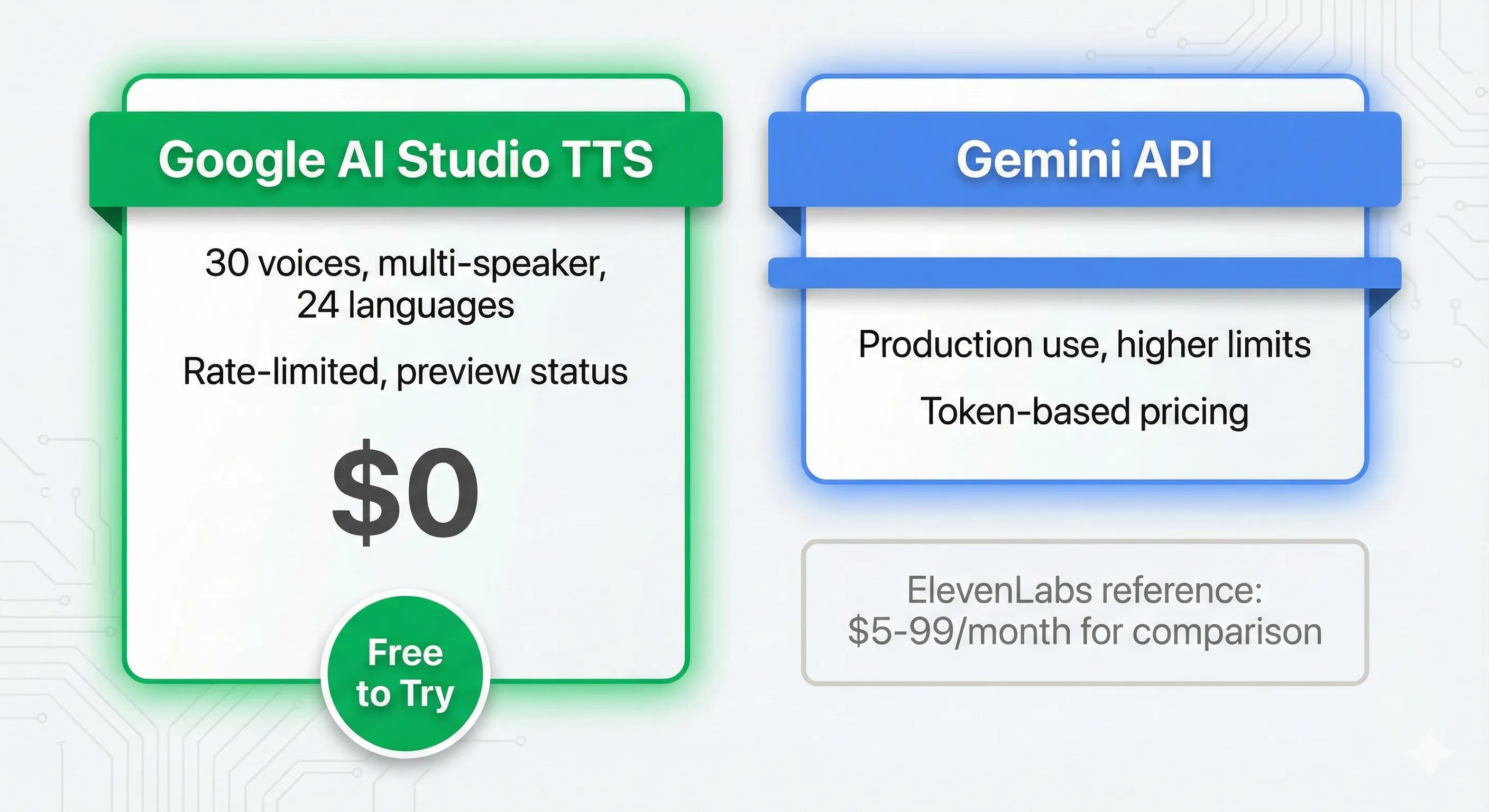

💰 Pricing: Is It Really Free?

Yes, Google AI Studio text to speech is genuinely free for most users. Here’s the full pricing picture as of March 2026:

Free Tier (Google AI Studio): Access to Gemini 2.5 Flash and Pro TTS models. All 30 voices available. Multi-speaker mode included. Rate-limited during peak hours. No credit card required. No voice cloning (that requires Cloud TTS API).

Paid Tier (Gemini TTS via Cloud API, now GA): Gemini 2.5 Flash TTS at approximately $0.01-0.04 per 1,000 characters. Gemini 2.5 Pro TTS at approximately $0.04-0.12 per 1,000 characters. Streaming synthesis included. Safety filter relaxation available for invoiced accounts. Production SLA guarantees.
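To turn those per-1,000-character ranges into a budget, here is a back-of-the-envelope calculator. The rates are the approximate ranges quoted above, not official list prices, and the assumption of about six characters per word (including spaces) is mine.

```python
# Approximate per-1,000-character price ranges quoted above (USD).
RATES = {
    "flash": (0.01, 0.04),
    "pro": (0.04, 0.12),
}


def estimate_cost(characters: int, model: str) -> tuple[float, float]:
    """Return the (low, high) cost estimate in USD for a character count."""
    low, high = RATES[model]
    units = characters / 1000
    return round(units * low, 2), round(units * high, 2)


# A 30,000-character script: roughly a 5,000-word chapter,
# assuming about six characters per word including spaces.
print(estimate_cost(30_000, "flash"))  # (0.3, 1.2)
print(estimate_cost(30_000, "pro"))    # (1.2, 3.6)
```

At these rates, even the Pro model stays under $4 for a full chapter, which is why the Flash vs Pro decision is more about quality needs than cost for most creators.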

Chirp 3 Instant Custom Voice (Cloud TTS): Voice cloning from 10 seconds of audio. Available in 30+ locales. Requires Cloud TTS API access. Additional per-character costs apply.

Don’t confuse Gemini TTS pricing with the older Cloud Text-to-Speech voices: The older voice families on Cloud TTS (Chirp 3 HD, WaveNet, and Standard; 380+ voices, 75+ languages) are priced separately and come with their own free allowances: 1 million characters/month for WaveNet and 4 million for Standard voices. Gemini TTS is the newer, more capable system.

For more context on how Google’s AI pricing works across their ecosystem, see our Google AI Studio review which covers the broader API cost structure.

👤 Who Should Use This (And Who Shouldn’t)

✅ Google AI Studio TTS Is For You If:

You’re a Content Creator Testing AI Voices. Perfect for YouTubers, bloggers, and course creators wondering if AI narration works for their content. Try it free before committing to ElevenLabs or hiring voice actors. The bracket markup tags make it easier than ever to create natural-sounding narration.

You Create Podcasts or Dialogue Content. The multi-speaker mode remains the best free option for podcast intros, interview simulations, educational dialogues, and storytelling content. With the GA release, consistency issues that plagued the preview have improved noticeably.

You’re a Developer Prototyping Voice Apps. The Gemini API integration lets you quickly prototype voice-enabled applications. The new streaming support means you can build real-time voice experiences. And the GA release means you can move from prototype to production without switching TTS providers.

You Work in Multiple Languages. The expansion to 80+ locales makes multilingual content creation more accessible than ever. Create Spanish, French, Japanese, or dozens of other language versions without hiring translators. For AI video tools that pair well with multilingual TTS, check our Seedance review and Kling AI guide.

You Need Quick Voiceovers Without Recording. Social media managers, marketers, and presenters who need fast voiceovers for internal use, draft content, or placeholder audio.

❌ Google AI Studio TTS Is NOT For You If:

You Need Easy Voice Cloning. Google now offers voice cloning via Chirp 3 Instant Custom Voice, but it requires Cloud TTS API setup, which isn’t beginner-friendly. ElevenLabs does voice cloning in 5 minutes through a simple web interface. Qwen3-TTS clones from 3 seconds but needs an NVIDIA GPU.

You Produce Professional Audiobooks. Long-form content (5,000+ words) still shows inconsistencies despite GA improvements. Voices can shift subtly between chunks, and the lack of SSML control on Gemini TTS (vs Chirp 3 HD) limits precision. Professional audiobook production still benefits from ElevenLabs or human voice actors.

You Need Sub-100ms Streaming Latency. While streaming is now supported, ElevenLabs achieves 75ms and Qwen3-TTS hits 97ms first-packet latency. Google’s streaming is adequate for most applications but not the fastest available.

You Require Maximum Emotional Range. Bracket markup tags improved emotional control significantly, but for dramatic voice acting (crying, laughing with nuance, whispering with emotional depth), ElevenLabs’ fine-tuned control sliders still outperform natural language prompting.

⚠️ Limitations You Need to Know (March 2026)

1. Inconsistent Output Between Generations. Improved with GA but not eliminated. The same script with the same settings can still sound different each time. The temperature parameter helps but doesn’t guarantee reproducibility.

2. No Voice Cloning in Free AI Studio. Chirp 3 Instant Custom Voice cloning requires Cloud TTS API access with billing. The free AI Studio interface is limited to 30 prebuilt voices.

3. 32K Token Context Limit. Each TTS session handles about 24,000 words. For full audiobooks, you’ll need to chunk content and manage consistency across segments.

4. No SSML on Gemini TTS. While Chirp 3 HD supports SSML tags for precise phonetic control, Gemini TTS relies on natural language prompting. For medical terminology, technical jargon, and brand names, pronunciation remains inconsistent.

5. WAV-Only Export in AI Studio. The free interface only exports WAV files. For MP3 or other formats, you need to convert externally or use the Cloud TTS API which supports additional encodings.

6. Safety Filters on Free Tier. The free tier still has aggressive safety filters that occasionally block legitimate content. The relaxation option requires invoiced billing, which isn’t available to most individual users.
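For limitation 5, the external conversion step can be scripted. This sketch assumes you have ffmpeg installed with the libmp3lame encoder (common in standard builds, but check yours); the function names and the 192k default bitrate are my own choices.

```python
import shutil
import subprocess


def mp3_command(wav_path: str, mp3_path: str, bitrate: str = "192k") -> list[str]:
    """Build an ffmpeg command that re-encodes a WAV file as MP3."""
    return [
        "ffmpeg", "-y",            # overwrite the output if it exists
        "-i", wav_path,            # WAV file downloaded from AI Studio
        "-codec:a", "libmp3lame",  # MP3 encoder
        "-b:a", bitrate,           # audio bitrate
        mp3_path,
    ]


def convert(wav_path: str, mp3_path: str) -> bool:
    """Run the conversion; return False when ffmpeg is not installed."""
    if shutil.which("ffmpeg") is None:
        return False
    subprocess.run(mp3_command(wav_path, mp3_path), check=True)
    return True


print(" ".join(mp3_command("narration.wav", "narration.mp3")))
```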

💬 What Users Are Actually Saying

Reddit Sentiment (r/artificial, r/Gemini): Discussions about Gemini TTS have picked up since the GA release. Users are generally positive about the free access and improved consistency. Multi-speaker mode continues to get specific praise. The bracket markup tags are well-received by content creators who want more control without learning complex tools.

Google Developer Forum Feedback: Technical users report that streaming support has unblocked production use cases. The most common remaining complaints involve voice consistency in very long scripts and wanting SSML-level control on Gemini TTS. The safety filter relaxation option was welcomed but criticized for being limited to invoiced accounts.

YouTube Tech Reviewers: Most tutorials focus on the free access and the improved Audio Profile prompting framework. Creators appreciate that the Voice Library applet saves time. Criticism centers on voice cloning requiring Cloud TTS setup rather than being available in the free interface.

Overall Community Sentiment (March 2026):

- Positive: Free access, multi-speaker mode, bracket markup tags, GA reliability, 80+ locales, Voice Library applet

- Negative: No voice cloning in free tier, inconsistency in long-form, safety filters too aggressive, no SSML support

- Neutral: Quality is now “genuinely good” but ElevenLabs still leads for premium work. Qwen3-TTS is a compelling free alternative for users with GPUs.

❓ FAQs: Your Questions Answered

Q: Is Google AI Studio text to speech actually free?

A: Yes, the AI Studio interface is genuinely free for most use cases. You can generate audio without a credit card. Rate limits exist during peak hours. Very high volume or production use requires the paid Cloud TTS API. Voice cloning via Chirp 3 also requires paid API access.

Q: Can I clone my voice with Google AI Studio TTS?

A: Not in the free AI Studio interface. Google now offers voice cloning via Chirp 3: Instant Custom Voice on the Cloud Text-to-Speech API, requiring just 10 seconds of clean audio and available in 30+ locales. For free voice cloning, Qwen3-TTS clones from 3 seconds but requires an NVIDIA GPU. ElevenLabs offers the easiest cloning experience from 5 minutes of audio.

Q: What changed with the GA release?

A: Gemini TTS Flash and Pro moved from preview to Generally Available on Cloud TTS API. Key improvements include production-grade SLA, streaming synthesis, bracket markup tags for non-speech sounds, expanded locale support (80+), Voice Library applet, safety filter relaxation option, and improved consistency. The free AI Studio interface benefits from the same model improvements.

Q: How does it compare to ElevenLabs now?

A: The gap has narrowed. Google’s Pro model now scores 4.2-4.5 on MOS (Mean Opinion Score), overlapping with ElevenLabs’ range. Google wins on free access, multi-speaker mode, and locale support (80+ vs 32). ElevenLabs still wins on emotional range, voice cloning ease, streaming latency (75ms), and voice variety (1,200+). For 80% of use cases, Google is “good enough.” For premium content, ElevenLabs justifies its price.

Q: What are bracket markup tags?

A: New control features that let you insert non-speech vocalizations (like [sigh], [laugh], [gasp]) directly into scripts. They operate in three modes: vocalization (produces audible sounds), modifier (changes delivery without being spoken), and spoken modifier (the word is spoken while influencing tone). This gives you more precise control over emotional delivery than pure natural language instructions.

Q: What languages does Google AI Studio TTS support?

A: With the GA release, Gemini TTS supports 30 speakers across 80+ locales. This includes multiple regional variants for major languages (European vs Latin American Spanish, for example). The model automatically detects input language and generates speech with appropriate accent and intonation.

Q: How long can audio clips be?

A: Each TTS session has a 32K token context limit (roughly 24,000 words). The maximum audio output is approximately 655 seconds (about 11 minutes) per generation. Individual prompt and text fields are capped at 4,000 bytes each, with 8,000 bytes total. For longer content, split your script into sections.
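Splitting by hand gets tedious, so here is a rough sketch of a sentence-aware splitter that keeps each chunk under the 4,000-byte field cap mentioned above. It measures UTF-8 bytes rather than characters, since accented and non-Latin text takes more than one byte per character. The sentence-splitting regex is a simplification of mine and will mis-split abbreviations like "Dr."

```python
import re

MAX_BYTES = 4000  # per-field cap quoted above


def chunk_script(text: str, max_bytes: int = MAX_BYTES) -> list[str]:
    """Greedily pack whole sentences into chunks under max_bytes (UTF-8)."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if len(candidate.encode("utf-8")) <= max_bytes:
            current = candidate
            continue
        if current:
            chunks.append(current)
        if len(sentence.encode("utf-8")) > max_bytes:
            raise ValueError("A single sentence exceeds the byte limit")
        current = sentence
    if current:
        chunks.append(current)
    return chunks


chapter = "This is one sentence of the chapter. " * 300
parts = chunk_script(chapter)
print(len(parts), max(len(p.encode("utf-8")) for p in parts))
```

Breaking on sentence boundaries matters more than hitting the limit exactly: a chunk that ends mid-sentence tends to produce an audible seam when you stitch the audio back together.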

Q: Can I use Google AI Studio TTS for commercial projects?

A: Yes, generated audio can be used commercially. The GA release on Cloud TTS provides clearer commercial terms than the preview. Always verify current terms as they may have specific requirements for attribution or usage in certain contexts.

Q: Can I use it for audiobooks?

A: You can, with improved but not perfect results. The GA release improved consistency between chunks. Short to medium audiobooks work reasonably well when segmented. For professional audiobook production requiring maximum consistency and emotional depth, ElevenLabs or human voice actors remain better options.

🏆 Final Verdict

Google AI Studio text to speech has gone from a promising preview to a genuinely production-ready voice generation platform in three months. The GA release, bracket markup tags, streaming support, and expanded locale coverage address the biggest limitations from our December review.

The Good:

- Genuinely free with generous limits and now production-grade reliability

- 30 high-quality prebuilt voices across 80+ locales

- Best-in-class multi-speaker dialogue mode (still unmatched for free)

- New bracket markup tags for non-speech vocalizations add real control

- Advanced Audio Profile prompting framework produces dramatically better results

- Streaming synthesis for real-time applications (Cloud API)

- Voice cloning now available via Chirp 3 (Cloud TTS, 10 seconds of audio)

- Voice Library applet saves time on voice selection

The Bad:

- No voice cloning in the free AI Studio interface (requires Cloud TTS API)

- Still inconsistent for very long-form content, though improved

- Limited fine-grained control compared to ElevenLabs’ slider-based approach

- Safety filters too aggressive on free tier (relaxation requires invoiced billing)

- No SSML support on Gemini TTS (available on older Chirp 3 HD only)

- WAV-only export in AI Studio interface

Use Google AI Studio TTS if: You’re testing whether AI voiceovers work for your content. You need multi-speaker dialogue without complex setup. Your budget is zero. You need 80+ locale support. You want a clear path from free prototype to paid production.

Upgrade to ElevenLabs if: You need the easiest voice cloning experience. You create professional audiobooks or premium content. Maximum emotional expression matters. You need the fastest streaming latency (75ms).

Try Qwen3-TTS if: You have an NVIDIA GPU and want unlimited free voice generation. You need voice cloning from just 3 seconds. You prefer open-source tools with full control. You process high volumes where per-character costs add up.

Try it today: aistudio.google.com → Generate media → Gemini speech generation



Related Reading

- Qwen3-TTS Review 2026: Free Open-Source Voice Cloning That Beats ElevenLabs

- ElevenLabs Review 2025: I Cloned My Voice In 5 Minutes (Real Results)

- Google AI Studio Review 2026: Gemini 3.1 Pro, Nano Banana 2 & 6 Free Models

- Gemini 3 Review: Google’s Answer to GPT-5

- Seedance Review: ByteDance’s AI Video Generator

- Kling AI Complete Guide 2026: Video with Built-in Audio

- NotebookLM Review: Slides and DeepThink Features

Last Updated: March 9, 2026

Gemini TTS Version: Gemini 2.5 Flash/Pro TTS (GA on Cloud TTS, Preview on Gemini API)

Next Review Update: May 2026 (post-Google I/O)

Super helpful review, thanks – is it possible to generate three-person audio with Google AI Studio? I read “multi-speaker” everywhere but haven’t been able to go beyond two speakers.

Thank you Anna,

In the “Generate Speech” interface or via the API, you typically define a MultiSpeakerVoiceConfig. This object currently supports two unique voice configurations. You assign names to these voices and then structure your script like a screenplay:

Speaker A: “Hi, how are you?”

Speaker B: “I’m doing great, thanks for asking!”
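For API users, the two-voice setup looks roughly like the payload built below. Treat this as a sketch: the nested field names follow my recollection of the Gemini API REST reference for speech generation, so verify them against the current docs before relying on them. Kore and Puck are two of the prebuilt voices, and the helper function itself is hypothetical.

```python
MAX_SPEAKERS = 2  # limit described in the reply above


def build_speech_config(voices: dict[str, str]) -> dict:
    """Map speaker names to prebuilt voice names in a REST-style payload."""
    if len(voices) > MAX_SPEAKERS:
        raise ValueError(f"At most {MAX_SPEAKERS} speakers per generation")
    return {
        "speechConfig": {
            "multiSpeakerVoiceConfig": {
                "speakerVoiceConfigs": [
                    {
                        "speaker": speaker,  # must match the name in the script
                        "voiceConfig": {
                            "prebuiltVoiceConfig": {"voiceName": voice}
                        },
                    }
                    for speaker, voice in voices.items()
                ]
            }
        }
    }


config = build_speech_config({"Speaker A": "Kore", "Speaker B": "Puck"})
print(len(config["speechConfig"]["multiSpeakerVoiceConfig"]["speakerVoiceConfigs"]))
```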

Can you “hack” a third speaker?

Technically, no—you cannot currently assign a third unique voice profile (e.g., a child’s voice, a deep male voice, and a high female voice) within a single generation task. However, you can achieve the effect of three speakers using these workarounds:

Contextual Tone Shifting: Since Gemini TTS is “steerable” via natural language, you can ask it to change the style of one of the two speakers for specific lines. For example:

Prompt: “Make Speaker 1 sound like an old man when he’s tired, and a young child when he’s excited.”

While the base voice remains the same, the performance might vary enough to sound like a different character.

Sequential Generation (Recommended):

Generate the dialogue for Speaker A and B first.

Generate a separate file for Speaker C.

Stitch them together in a video or audio editor. This is often the best approach for your YouTube channel tutorials, where you likely already use tools like OBS or video editors.
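If you would rather script the stitching step than open an editor, Python's built-in wave module can append WAV files end to end. This is a minimal sketch that assumes every file shares the same channel count, sample width, and sample rate, which should hold for clips downloaded from the same tool but is worth checking. The function name is my own.

```python
import wave


def concat_wavs(inputs: list[str], output: str) -> None:
    """Append WAV files end to end; all must share one audio format."""
    params = None
    frames = []
    for path in inputs:
        with wave.open(path, "rb") as src:
            fmt = (src.getnchannels(), src.getsampwidth(), src.getframerate())
            if params is None:
                params = fmt
            elif fmt != params:
                raise ValueError(f"{path} has a different audio format")
            frames.append(src.readframes(src.getnframes()))
    if params is None:
        raise ValueError("No input files given")
    with wave.open(output, "wb") as dst:
        dst.setnchannels(params[0])
        dst.setsampwidth(params[1])
        dst.setframerate(params[2])
        for chunk in frames:
            dst.writeframes(chunk)
```

A call like concat_wavs(["speakers_ab.wav", "speaker_c.wav"], "scene.wav") joins two clips in order (the file names are placeholders). For a conversation where Speaker C interrupts mid-scene, generate each stretch of dialogue as its own clip and pass them in playback order.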

Why the 2-Speaker Limit?

The model is currently optimized for “Seamless Dialogue” and “handoffs” between two identities to ensure consistency in pitch and tone across a conversation (like a podcast or interview). Expanding to three or more voices often introduces “voice drift,” where the identities begin to blend into each other.
